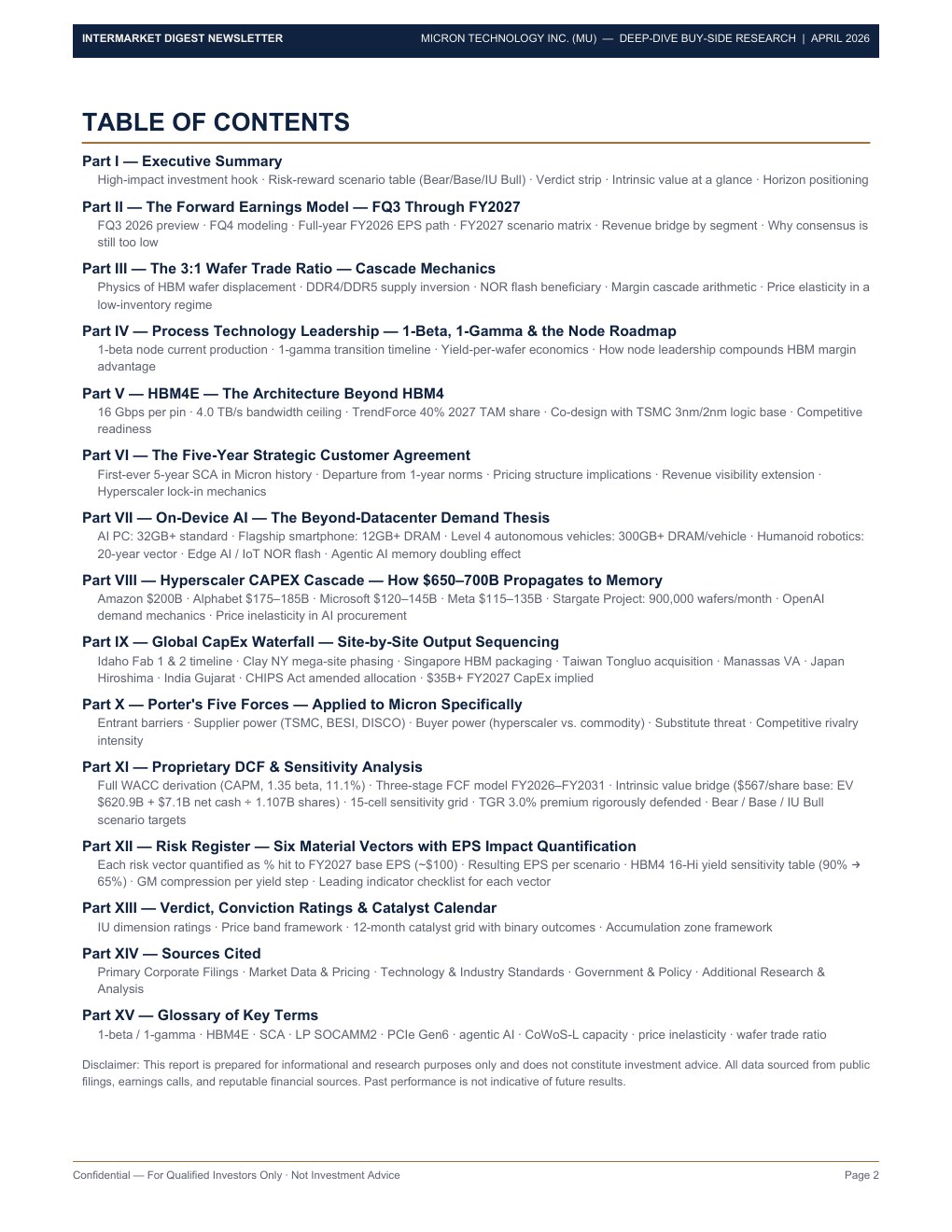

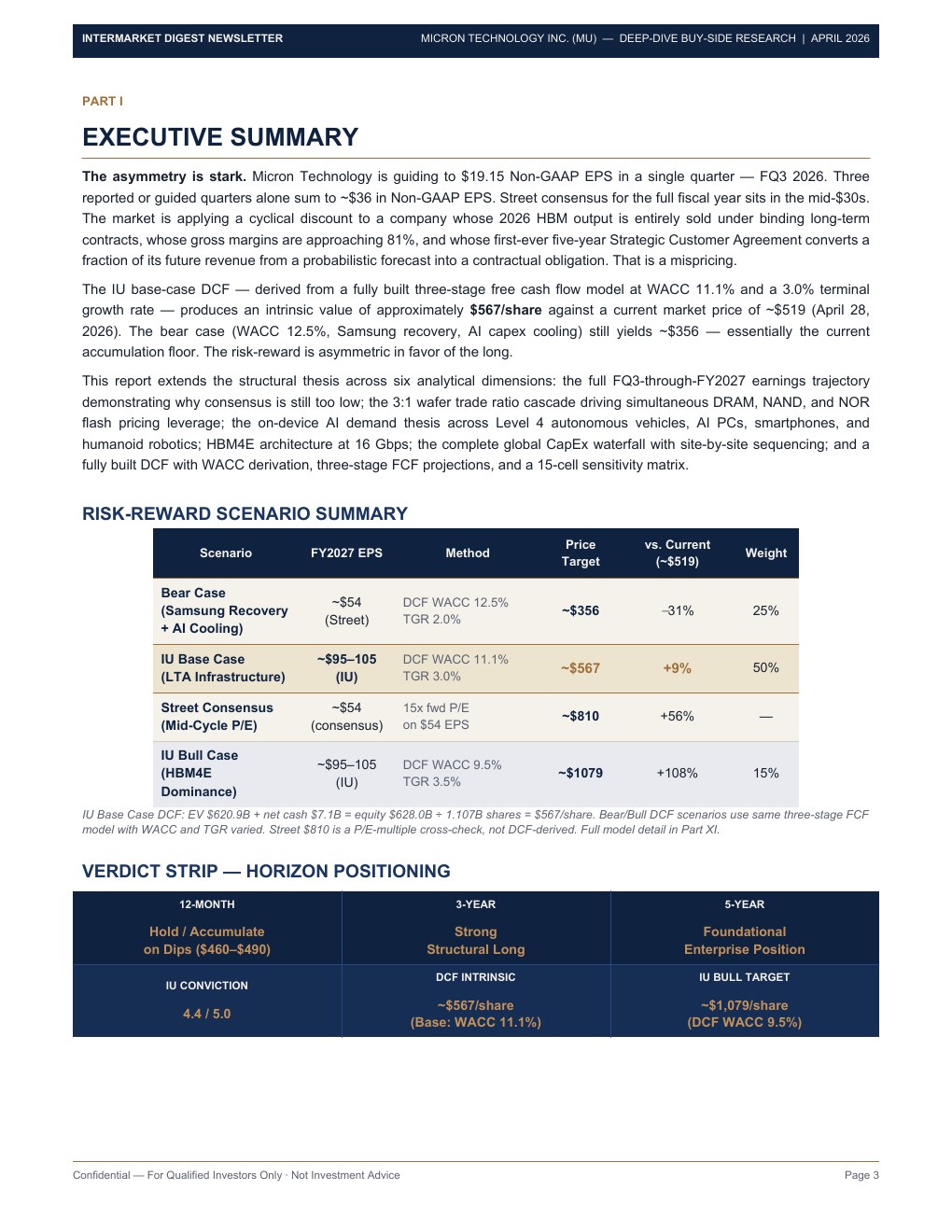

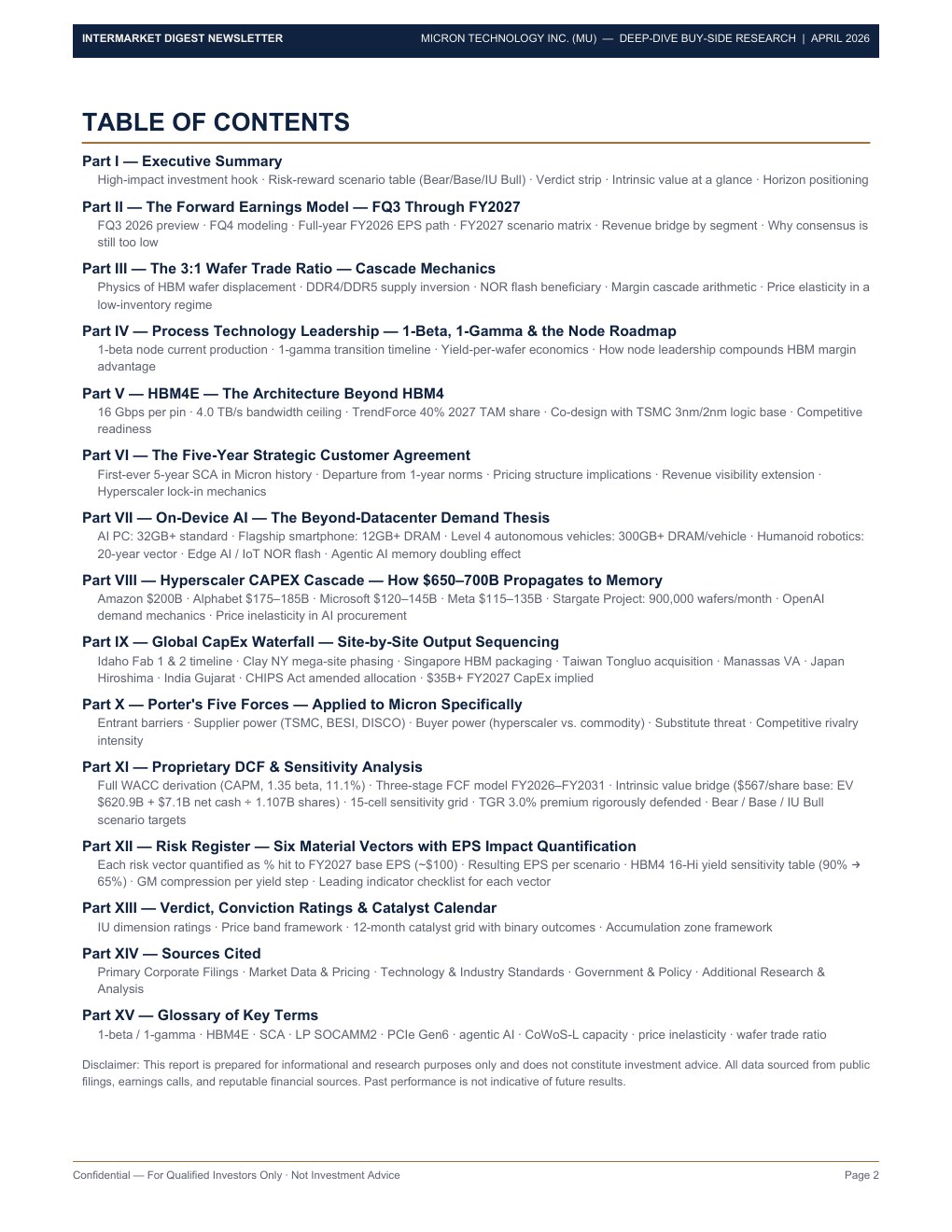

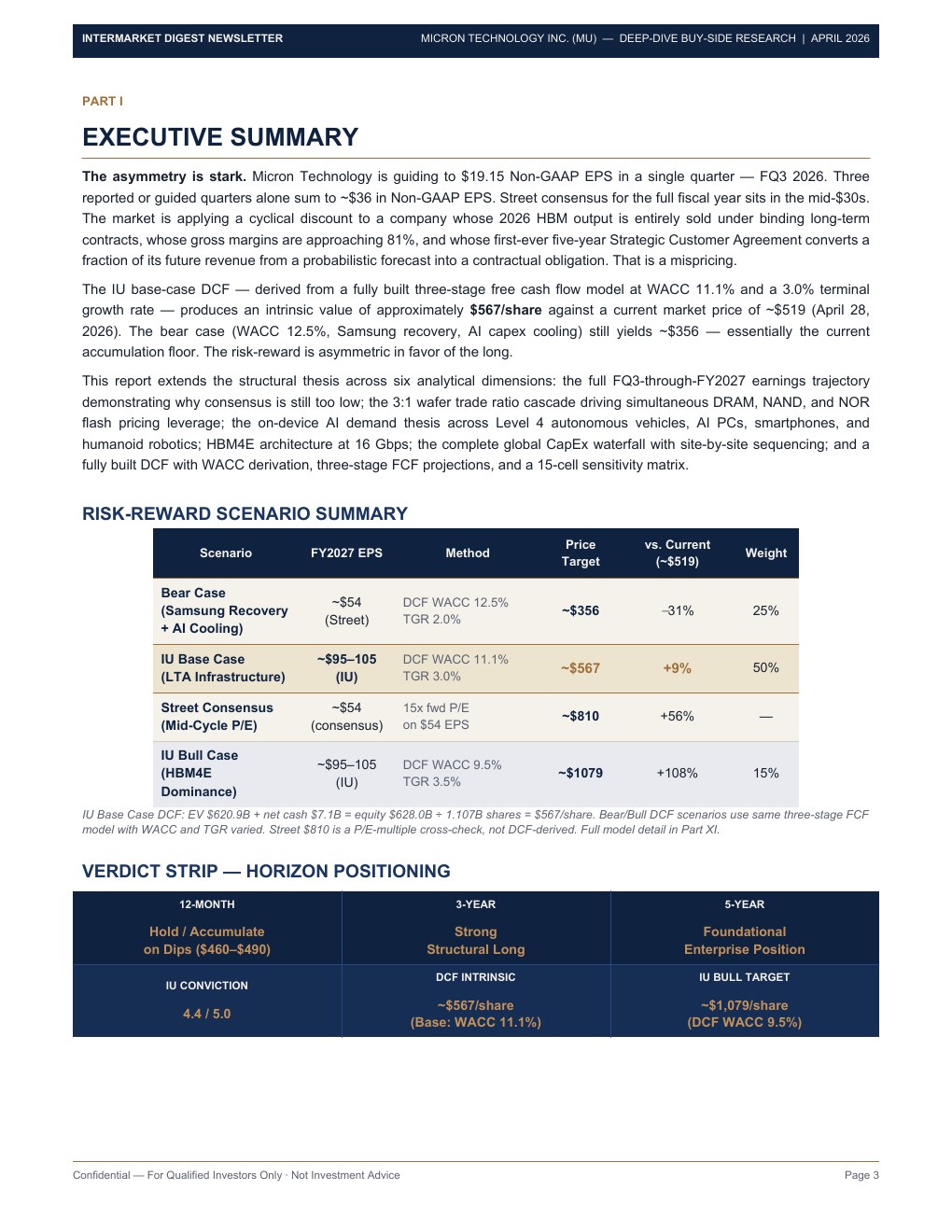

A 15-part buy-side deep dive covering the FQ3–FY2027 earnings model, 3:1 wafer trade ratio cascade mechanics, HBM4E architecture at 4.0 TB/s, on-device AI across autonomous vehicles and AI PCs, a proprietary DCF (base-case intrinsic value ~$567/share), and a 15-cell sensitivity matrix.


For research purposes only; not investment advice.

Preview of original formatted report (2.8 MB · 27 pages) — full access for subscribers.
